Classifying hazelnuts using a Raspberry Pi

Last summer, my family and I went to Piedmont, and we really enjoyed the beautiful landscapes, good weather and excellent wine.

Piedmont also specializes in hazelnuts, roasted or processed into cream, with sugar or chocolate. We visited a farm and learned that after the hazelnuts are gathered and cleaned, they are peeled and roasted. Then it is decided whether they are "Class A" quality, which can be packed and sold directly, or "Class B" quality, which is crushed and processed into products such as hazelnut spread. This is mainly a "cosmetic" matter: almost all "foul" nuts have already been sorted out, but after peeling, only the nuts with no remaining dark spots qualify to be sold directly.

All of the sorting is still done by hand (at least at the farm we visited), which takes a lot of time and is very tedious. Usually two people sit at a conveyor belt and try to spot hazelnuts that are not Class A quality.

Photos from cascinascavin.com

Can we detect whether a hazelnut is Class A quality using a simple Raspberry Pi with a camera module?

Setup

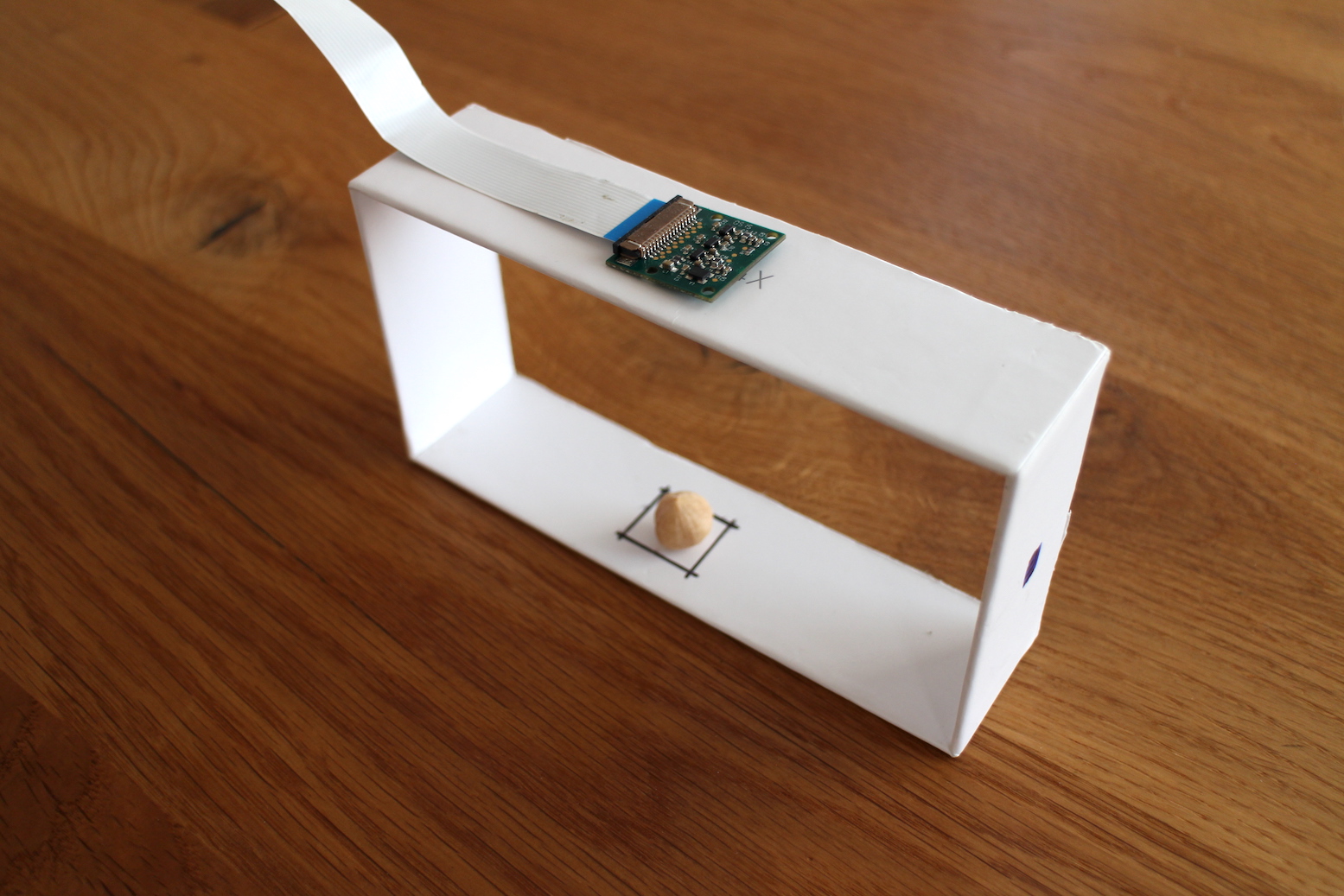

I used an old Raspberry Pi with the Camera module, which provides a 1.3MP photo.

I then cut a simple frame out of cardboard to mount the camera and drew a square where the hazelnut should be placed:

Taking a picture is really simple:

    from picamera import PiCamera

    # Open the Pi camera and save a single still image
    # (the ./img directory must already exist).
    camera = PiCamera()
    camera.capture('./img/test.jpg')

For immediate feedback (without using a PC), I decided to add an Adafruit RGB Negative 16x2 LCD+Keypad Kit to show what the program would evaluate:

It takes some soldering and tape depending on the model of Raspberry Pi you have.
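To give an idea of how a result could end up on the display, here is a minimal sketch. It is not the code from my scripts: the function names `format_lcd_text` and `show_result`, the message layout and the backlight colours are all made up for illustration. It assumes an LCD object that offers `clear()`, `set_color()` and `message()`, which is what Adafruit's legacy `Adafruit_CharLCD` Python package provides for this plate.

```python
LCD_WIDTH = 16  # characters per line on the 16x2 display


def format_lcd_text(is_class_a, dark_fraction):
    """Build a two-line message; each line is cut to the display width.

    The layout (class on line 1, dark-pixel share on line 2) is an
    illustrative choice, not the one from the original scripts.
    """
    line1 = "Class A" if is_class_a else "Class B"
    line2 = "dark: {:.1f}%".format(dark_fraction * 100)
    return "\n".join(line[:LCD_WIDTH] for line in (line1, line2))


def show_result(lcd, is_class_a, dark_fraction):
    """Write the result to any object offering clear(), set_color(), message()."""
    lcd.clear()
    # Assumed colour scheme: green backlight for Class A, red for Class B.
    if is_class_a:
        lcd.set_color(0.0, 1.0, 0.0)
    else:
        lcd.set_color(1.0, 0.0, 0.0)
    lcd.message(format_lcd_text(is_class_a, dark_fraction))


# On the Pi, assuming the Adafruit_CharLCD package is installed:
#   import Adafruit_CharLCD as LCD
#   show_result(LCD.Adafruit_CharLCDPlate(), True, 0.012)
```

Passing the LCD object in as a parameter keeps the formatting logic testable on a PC, where the display hardware is not available.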

Classifying

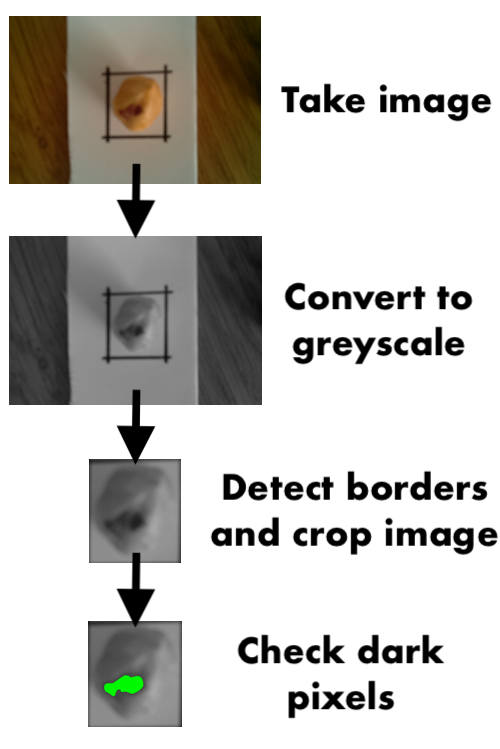

After taking some images and playing around, I decided to go with the simplest possible version: convert the image to greyscale and then count the number of dark pixels.

First, the black border of the drawn square is detected. Then we simply count the number of "dark" pixels inside it, using different thresholds, to estimate how much of the hazelnut is covered by dark spots.

I'm sure that, with some training data, a trained CNN would perform much better (and, more importantly, be more robust to brightness changes), but for a first simple recognition, the above is enough.
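The greyscale-and-count idea described above can be sketched in plain Python as follows. This is an illustration under assumptions, not the code from my scripts: all function names, the threshold values, the margin and the final decision rule are hypothetical, and the border is located simply as the bounding box of all very dark pixels. The image is represented as rows of `(r, g, b)` tuples so that the example needs no extra libraries.

```python
# Sketch only: every constant below is an assumed, illustrative value.
BORDER_THRESHOLD = 40            # grey level below which a pixel counts as border
DARK_THRESHOLDS = (60, 90, 120)  # grey levels tested for dark spots


def to_grey(rgb_rows):
    """Convert rows of (r, g, b) tuples to 0-255 grey values (ITU-R 601 weights)."""
    return [[int(round(0.299 * r + 0.587 * g + 0.114 * b)) for r, g, b in row]
            for row in rgb_rows]


def find_border_box(grey, threshold=BORDER_THRESHOLD):
    """Return (top, bottom, left, right) of the box enclosing all very dark pixels."""
    ys = [y for y, row in enumerate(grey) if any(v < threshold for v in row)]
    xs = [x for row in grey for x, v in enumerate(row) if v < threshold]
    if not ys:
        raise ValueError("no border found")
    return min(ys), max(ys), min(xs), max(xs)


def dark_fractions(grey, box, margin=1, thresholds=DARK_THRESHOLDS):
    """Share of pixels darker than each threshold inside the border.

    `margin` must be at least the border thickness in pixels so that the
    border itself is not counted as a dark spot.
    """
    top, bottom, left, right = box
    inner_rows = grey[top + margin:bottom - margin + 1]
    pixels = [v for row in inner_rows
              for v in row[left + margin:right - margin + 1]]
    if not pixels:
        raise ValueError("nothing left inside the border")
    return {t: sum(v < t for v in pixels) / float(len(pixels)) for t in thresholds}


def looks_class_a(fractions, max_fraction=0.02):
    """Hypothetical rule: Class A if no threshold exceeds the allowed dark share."""
    return all(f <= max_fraction for f in fractions.values())
```

For a real photo, Pillow's `Image.open(path).convert("L")` would give the greyscale values directly, so `to_grey` would not be needed. The thresholds would have to be tuned to the lighting of the actual setup.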

Putting it together

Here you can see it in action:

The scripts used are available in this GitHub repo. It also contains some test images that can be scored to check whether the algorithm works as intended.

Thanks for reading!