Searching bookmarks

Ever had the problem that you remember a site or a blog post, but have no idea when or where you saw it? If you share my habit of bookmarking every potentially interesting page, you have surely run into the same problem I had last week: knowing that somewhere in your bookmarks lies the website with exactly the thing you're searching for, but being unable to find it.

And maybe you vaguely remember that back then it took you hours to google that shit, and you're not willing to do it all over again. That's extremely frustrating, especially if you're as chaotic as I am and keep zero order in your bookmarks (who's got time for that, seriously? :)).

So I wrote a Python script that downloads all my bookmarked pages and makes them full-text searchable. It works as follows:

- Step 1: Export your bookmarks as HTML-file and save it as

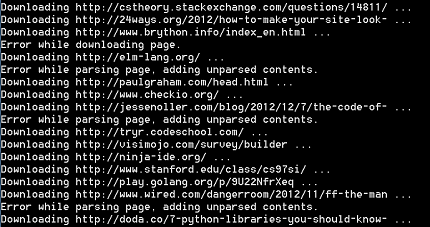

bookmarks.html(the script currently works with Chrome bookmark files. Check here on how to export your bookmarks) - Step 2: Run the script and let it download all your bookmarked pages (this could take a while)

- Step 3: Once downloaded, the pages are pickled (saved) into a file, so on the next start you don't have to download them all again. Neat!

- Step 4: Enter search terms, and all pages containing every one of them (in the page text or in the URL) are listed.
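Steps 1 and 2 depend on pulling the URLs out of the exported bookmarks.html. In Chrome's export (the Netscape bookmark file format) every bookmark is an `<A HREF="...">` tag, and the script splits the file on the literal string `A HREF="`, which only works as long as the attribute is written exactly that way. As a hedged alternative, here is a minimal Python 3 sketch that uses the standard library's `html.parser` instead. `BookmarkParser`, `extract_bookmarks` and the sample markup are made up for this example; the sample is an abbreviated imitation of an export, not a real file.

```python
from html.parser import HTMLParser


class BookmarkParser(HTMLParser):
    """Collect the href of every <a> tag in a bookmark export (illustrative helper)."""

    def __init__(self):
        super().__init__()
        self.urls = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag and attribute names, so <A HREF=...> arrives as 'a' / 'href'
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.urls.append(value)


def extract_bookmarks(html):
    """Return the bookmarked URLs in document order, dropping duplicates."""
    parser = BookmarkParser()
    parser.feed(html)
    parser.close()
    seen = set()
    unique = []
    for url in parser.urls:
        if url not in seen:
            seen.add(url)
            unique.append(url)
    return unique


# Hypothetical, shortened sample in the style of a Chrome export
sample = '''<!DOCTYPE NETSCAPE-Bookmark-file-1>
<DL><p>
    <DT><A HREF="http://pypy.org/" ADD_DATE="1355000000">PyPy</A>
    <DT><A HREF="http://docs.python.org/2/library/multiprocessing.html">multiprocessing</A>
    <DT><A HREF="http://pypy.org/">PyPy again</A>
</DL><p>'''

print(extract_bookmarks(sample))
```

A parser also copes with lowercase or differently ordered attributes, at the cost of a few more lines than a string split.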

In my case, downloading 1300+ pages does indeed take a while.

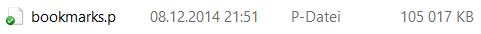
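Most of that time is spent waiting on the network, one page after another, because the script fetches the bookmarks sequentially. One possible speedup, which is not part of the original script, is to run several downloads at once with a thread pool. Below is a minimal Python 3 sketch using `concurrent.futures.ThreadPoolExecutor`; `fetch_all`, `fake_fetch`, the default of 8 workers and the `.example` URLs are illustrative assumptions, and the fake fetch function only exists so the sketch runs without network access.

```python
from concurrent.futures import ThreadPoolExecutor


def fetch_all(urls, fetch, workers=8):
    """Call fetch(url) for every URL on a pool of threads; return {url: text}.

    A URL whose fetch raises is stored as '' so one dead link
    doesn't abort the whole run.
    """
    def safe_fetch(url):
        try:
            return fetch(url)
        except Exception as exc:  # timeouts, HTTP errors, decoding errors, ...
            print("Error:", url, exc)
            return ""

    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map yields results in the same order as `urls`
        return dict(zip(urls, pool.map(safe_fetch, urls)))


# Stand-in downloader (hypothetical). A real one could be, for example:
#   lambda u: urllib.request.urlopen(u, timeout=5).read().decode("utf-8", "replace")
def fake_fetch(url):
    if "broken" in url:
        raise IOError("timed out")
    return "text of " + url


pages = fetch_all(["http://a.example/", "http://broken.example/"], fake_fetch)
print(pages)
```

Threads are a reasonable fit here because the work is I/O-bound; how many workers actually help depends on your connection and on the sites involved.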

When the download has finished, the script writes all pages into a ~100 MB pickle (bookmarks.p), which is loaded instead of downloading everything again when the program is restarted.
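Step 3 is a classic load-or-build cache: if the pickle exists, read it; otherwise do the expensive work once and save the result. Here is a minimal Python 3 sketch of that pattern. `load_or_build` and `build_library` are hypothetical names, and `build_library` merely stands in for the download step. Unlike the script, the sketch dumps to a temporary file and only renames it into place once the dump has completed, so a run killed mid-write doesn't leave a truncated pickle behind.

```python
import os
import pickle
import tempfile


def load_or_build(path, build):
    """Return the object pickled at `path`; if there is none yet,
    call build(), pickle the result to `path` and return it."""
    if os.path.exists(path):
        with open(path, "rb") as f:
            return pickle.load(f)
    library = build()
    tmp = path + ".tmp"
    with open(tmp, "wb") as f:
        # newest pickle protocol the running Python supports
        pickle.dump(library, f, protocol=pickle.HIGHEST_PROTOCOL)
    os.replace(tmp, path)  # move the finished file into place
    return library


calls = []


def build_library():
    calls.append(1)  # count how often the expensive step runs
    return {"http://pypy.org/": "PyPy is a fast Python implementation"}


path = os.path.join(tempfile.mkdtemp(), "bookmarks.p")
first = load_or_build(path, build_library)   # builds and writes the pickle
second = load_or_build(path, build_library)  # reads the pickle back
print(first == second, len(calls))  # True 1
```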

Searching some terms:

```
Found existing library, reading.

Enter search terms (quit with q): python threads
http://www.brunningonline.net/simon/python/quick-ref2_0.html
http://pypy.org/
http://www.doughellmann.com/PyMOTW/multiprocessing/basics.html
http://stackoverflow.com/questions/2846653/python-multithreading-for-dummies
http://io9.com/5975592/aaron-swartz-died-innocent-++-here-is-the-evidence
http://morepypy.blogspot.de/2012/08/multicore-programming-in-pypy-and.html
http://docs.python.org/2/library/multiprocessing.html

Enter search terms (quit with q): python processes
http://wiki.python.org/moin/PersistenceTools
http://python3porting.com/improving.html
http://python.net/~goodger/projects/pycon/2007/idiomatic/handout.html
http://news.ycombinator.com/item?id=3120380
http://www.doughellmann.com/PyMOTW/multiprocessing/basics.html
http://stackoverflow.com/questions/2846653/python-multithreading-for-dummies
http://morepypy.blogspot.de/2012/08/multicore-programming-in-pypy-and.html
http://docs.python.org/2/library/multiprocessing.html

Enter search terms (quit with q): multiprocessing
http://python3porting.com/improving.html
http://www.doughellmann.com/PyMOTW/multiprocessing/basics.html
http://stackoverflow.com/questions/2846653/python-multithreading-for-dummies
http://morepypy.blogspot.de/2012/08/multicore-programming-in-pypy-and.html
http://docs.python.org/2/library/multiprocessing.html
```

Code

The Python code (Python 2.7; you'll need the html2text module):

```python
'''Downloads your bookmarked pages and makes them full-text searchable.'''

__author__ = "Adrianus Kleemans"
__date__ = "December 2014"

import os.path
import pickle
from urllib2 import urlopen

import html2text


def download(url):
    '''Fetch a URL; if the server declares a charset, re-encode the body as ASCII.'''
    f = urlopen(url, timeout=5)
    try:
        data = f.read()
        # read the declared charset before closing the connection
        charset = f.headers.getparam('charset')
    finally:
        f.close()
    if charset is not None:
        try:
            # decode with the declared charset, then drop non-ASCII characters
            data = data.decode(charset).encode('ascii', 'ignore')
        except (UnicodeDecodeError, LookupError):
            print 'Error decoding with charset=', charset
    return data


def download_library(library_pickle):
    '''Download every bookmarked page, convert it to text and pickle the result.'''
    library = {}
    with open('bookmarks.html', 'r') as f:
        bookmark_file = f.read()

    # every bookmark in Chrome's export looks like <A HREF="url" ...>
    bookmarks = [b.split('"')[0] for b in bookmark_file.split('A HREF="')[1:]]
    print 'Found', len(bookmarks), 'bookmarks.'

    # download bookmarked pages (duplicate URLs are fetched only once)
    for bookmark in bookmarks:
        if bookmark not in library:
            print 'Downloading', bookmark[:50], '...'
            txt = ''
            try:
                txt = download(bookmark)
                txt = html2text.html2text(txt)
            except Exception as e:
                print 'Error:', e
            library[bookmark] = txt

    with open(library_pickle, 'wb') as f:
        pickle.dump(library, f)
    return library


def main():
    library_pickle = 'bookmarks.p'
    if os.path.exists(library_pickle):
        print 'Found existing library, reading.'
        with open(library_pickle, 'rb') as f:
            library = pickle.load(f)
    else:
        print 'No library found. Downloading, this could take a while...'
        library = download_library(library_pickle)

    # main search loop: a page matches if every term occurs in its text or its URL
    while True:
        keywords = raw_input('\nEnter search terms (quit with q): ').split()
        if keywords == ['q']:
            break
        if not keywords:
            continue
        for bookmark, text in library.items():
            if all(k in text or k in bookmark for k in keywords):
                print bookmark
    print 'Finished.'


if __name__ == "__main__":
    main()
```
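The search loop treats the query as a list of terms that must all occur, as plain substrings, either in a page's text or in its URL, and the comparison is case-sensitive ("Python" does not match "python"). A minimal Python 3 sketch of the same AND search with case-insensitive matching follows; `search` and the two sample pages are made up for illustration. Joining URL and text with a newline is safe because the terms are split on whitespace, so no term can match across the boundary.

```python
def search(library, query):
    """Return the sorted URLs whose URL or text contains every term of `query`,
    ignoring case. An empty query returns no results."""
    terms = query.lower().split()
    if not terms:
        return []
    hits = []
    for url, text in library.items():
        # lowercase URL and text once per page instead of once per term
        haystack = (url + "\n" + text).lower()
        if all(term in haystack for term in terms):
            hits.append(url)
    return sorted(hits)


# Hypothetical library in the same shape as the pickle: {url: page text}
library = {
    "http://docs.python.org/2/library/multiprocessing.html":
        "multiprocessing is a package that supports spawning processes",
    "http://pypy.org/":
        "PyPy is a fast, compliant alternative implementation of Python",
}

print(search(library, "Python processes"))
# ['http://docs.python.org/2/library/multiprocessing.html']
```

Sorting the hits makes the output stable between runs; the script itself prints matches in dictionary order.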